-

DEFROST: Detecting Excess in Faraday Rotation thrOugh Sophisticated analysis Techniques

Vacca, V., Hutschenreuter, S., Cabriolu, A., Enßlin, T.A., Roth, J., Reineke, M., Frank, P., Govoni, F., Murgia, M., Fenu, G.

Astronomy & Astrophysics (accepted), 2026

-

The radial component of the local Galactic magnetic field in 3D

McCallum, L., Frank, P., Hutschenreuter, S., Benjamin, R., Booth, R.A., Clark, S.E., Haverkorn, M., Hill, A.S., Mertsch, P., Ordog, A., Saydjari, A.K., West, J.

MNRAS, 2026

-

Bayesian multiband imaging of SN1987A in the Large Magellanic Cloud with SRG/eROSITA

Eberle, V., Guardiani, M., Westerkamp, M., Frank, P., et al.

Astronomy & Astrophysics 2026, 706, A229

-

A Bayesian method for air-shower reconstruction using Information Field Theory

Terveer, K., Bouma, S., Buitink, S., et al. incl. Frank, P.

Astroparticle Physics 2026, 179, 103241

-

The influence of the 3D Galactic gas structure on cosmic-ray transport and gamma-ray emission

Ramírez, A., Edenhofer, G., Enßlin, T.A., Frank, P., et al.

Astroparticle Physics 2026, 174, 103151

-

J-UBIK: The JAX-accelerated Universal Bayesian Imaging Kit

Eberle, V., Guardiani, M., Westerkamp, M., Frank, P., Rüstig, J., Stadler, J., Enßlin, T.A.

Journal of Open Source Software, 11(120), 7768, 2026

-

Latent-space Field Tension for Astrophysical Component Detection

Guardiani, M., Eberle, V., Westerkamp, M., Rüstig, J., Frank, P., Enßlin, T.A.

Astronomy & Astrophysics 2025, 703, A203

-

Spatially Coherent 3D Distributions of HI and CO in the Milky Way

Söding, L., Edenhofer, G., Enßlin, T.A., Frank, P., et al.

Astronomy & Astrophysics 2025, 693, A139

-

Towards a Field Based Bayesian Evidence Inference from Nested Sampling Data

Westerkamp, M., Roth, J., Frank, P., Handley, W., Enßlin, T.A.

Entropy 2024, 26(11), 930

-

fast-resolve: Fast Bayesian Radio Interferometric Imaging

Roth, J., Frank, P., Bester, H.L., Smirnov, O.M., et al.

Astronomy & Astrophysics 2024, 690, A387

-

Disentangling the Faraday rotation sky

Hutschenreuter, S., Haverkorn, M., Frank, P., et al.

Astronomy & Astrophysics 2024, 690, A314

-

Non-parametric Bayesian reconstruction of Galactic magnetic fields using Information Field Theory

Tsouros, A., Bendre, A.B., Edenhofer, G., Enßlin, T.A., Frank, P., et al.

Astronomy & Astrophysics 2024, 690, A102

-

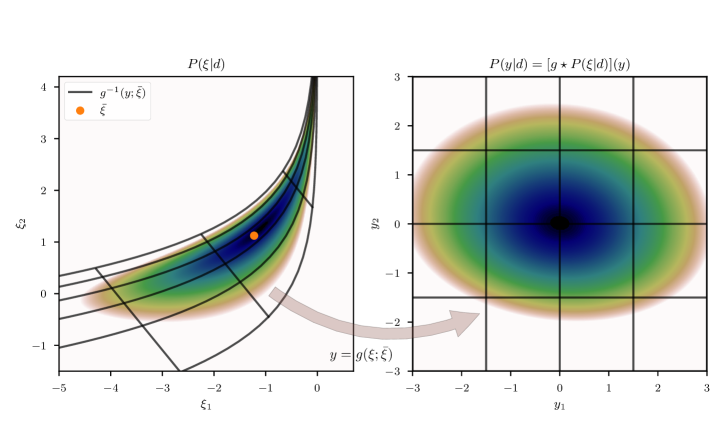

Re-Envisioning Numerical Information Field Theory (NIFTy.re): A Library for Gaussian Processes and Variational Inference

Edenhofer, G., Frank, P., Roth, J., Leike, R.H., et al.

Journal of Open Source Software, 9(98), 6593, 2024

-

A Parsec-Scale Galactic 3D Dust Map out to 1.25 kpc from the Sun

Edenhofer, G., Zucker, C., Frank, P., Saydjari, A.K., et al.

Astronomy & Astrophysics 2024, 685, A82

-

First spatio-spectral Bayesian imaging of SN1006 in X-ray

Westerkamp, M., Eberle, V., Guardiani, M., Frank, P., et al.

Astronomy & Astrophysics 2024, 684, A155

-

Attention to Entropic Communication

Enßlin, T.A., Weidinger, C., Frank, P.

Annalen der Physik 2024, 2300334

-

Introducing LensCharm – A charming Bayesian strong lensing reconstruction framework

Rüstig, J., Guardiani, M., Roth, J., Frank, P., Enßlin, T.A.

Astronomy & Astrophysics 2024, 682, A146

-

Inferring Evidence from Nested Sampling Data via Information Field Theory

Westerkamp, M., Roth, J., Frank, P., Handley, W., Enßlin, T.A.

Phys. Sci. Forum 2023, 9(1), 19

-

Multi-Component Imaging of the Fermi Gamma-ray Sky in the Spatio-spectral Domain

Platz, L.I., Knollmüller, J., Arras, P., Frank, P., et al.

Astronomy & Astrophysics 2023, 680, A2

-

Butterfly Transforms for Efficient Representation of Spatially Variant Point Spread Functions in Bayesian Imaging

Eberle, V., Frank, P., Stadler, J., Streit, S., Enßlin, T.A.

Entropy 2023, 25(4), 652

-

Dynamical field inference and supersymmetry

Westerkamp, M., Ovchinnikov, I., Frank, P., Enßlin, T.A.

Entropy 2021, 23, 1652

-

Probabilistic Autoencoder using Fisher Information

Zacherl, J., Frank, P., Enßlin, T.A.

Entropy 2021, 23, 1640

-

Bayesian decomposition of the Galactic multi-frequency sky using probabilistic autoencoders

Milosevic, S., Frank, P., Leike, R., Müller, A., Enßlin, T.A.

Astronomy & Astrophysics 2021, 650, A100

-

Reconstructing non-repeating radio pulses with Information Field Theory

Welling, C., Frank, P., Enßlin, T.A., Nelles, A.

JCAP 2021, 04, 071

-

Non-parametric Bayesian Causal Modeling of the SARS-CoV-2 Viral Load Distribution vs. Patient's Age

Guardiani, M., Frank, P., Kostić, A., et al.

PLoS ONE 2022, 17(10), e0275011

-

Unified radio interferometric calibration and imaging with joint uncertainty quantification

Arras, P., Frank, P., Leike, R., Westermann, R., Enßlin, T.A.

Astronomy & Astrophysics 2019, 627, A134

-

NIFTy 3 – Numerical Information Field Theory: A Python Framework for Multicomponent Signal Inference on HPC Clusters

Steininger, T., Dixit, J., Frank, P., et al.

Annalen der Physik 2019, 531, 1800290

-

Sharpening up Galactic all-sky maps with complementary data – A machine learning approach

Müller, A., Hackstein, M., Greiner, M., Frank, P., et al.

Astronomy & Astrophysics 2018, 620, A64

-

The 3D Structure and Kinematics of the Local Disk-Halo Interface: Intermediate-velocity Clouds are the Minority of High-altitude Clouds in the Solar Neighborhood

O’Neill, T.J., Saydjari, A.K., Zucker, C., Koch, E.W., Benjamin, R.A., Frank, P., Yoshida, S.

arXiv:2605.24342, 2026

-

A Three-Dimensional Tomographic Reconstruction of the Galactic Cosmic-Ray Proton Density

Zandinejad, H., Roth, J., Minh Phan, V.H., Edenhofer, G., Frank, P., Mertsch, P., Kissmann, R., Ramírez, A., Söding, L., Enßlin, T.A.

arXiv:2605.22739, 2026

-

aim-resolve: Automatic Identification and Modeling for Bayesian Radio Interferometric Imaging

Fuchs, R., Knollmüller, J., Roth, J., Eberle, V., Frank, P., Enßlin, T.A., Heinrich, L.

arXiv:2512.04840, 2025

-

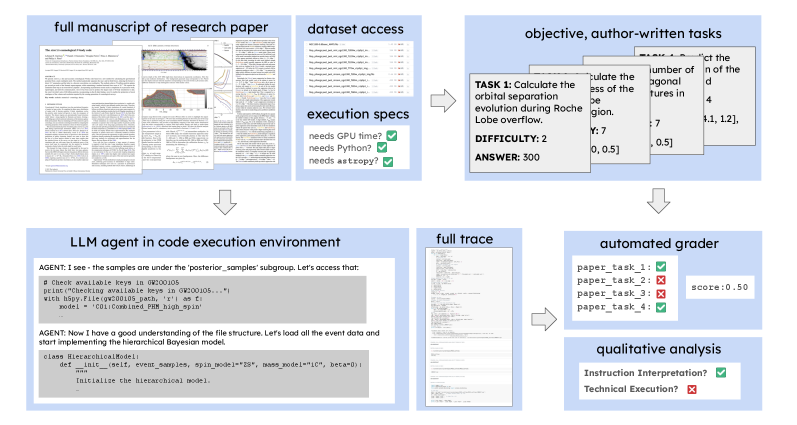

ReplicationBench: Can AI Agents Replicate Astrophysics Research Papers?

Ye, C., Yuan, S., Cooray, S., et al. incl. Frank, P.

arXiv:2510.24591, 2025

-

Information Field Theory with JAX infers Air Shower Electric Currents from Antenna Signal Traces

Straub, M., Enßlin, T.A., Erdmann, M., Frank, P., Zingler, M.

arXiv:2507.20555, 2025

-

Correlation as a Resource in Unitary Quantum Measurements

Johnson, V., Singh, A., Leike, R., Frank, P., Enßlin, T.A.

arXiv:2212.03829, 2025

-

Sparse Kernel Gaussian Processes through Iterative Charted Refinement (ICR)

Edenhofer, G., Leike, R.H., Frank, P., Enßlin, T.A.

arXiv:2206.10634, 2022

-

The Galactic 3D large-scale dust distribution via Gaussian process regression on spherical coordinates

Leike, R.H., Edenhofer, G., Knollmüller, J., Alig, C., Frank, P., Enßlin, T.A.

arXiv:2204.11715, 2022

-

Separating diffuse from point-like sources – a Bayesian approach

Knollmüller, J., Frank, P., Enßlin, T.A.

arXiv:1804.05591, 2018